# 🧠 Neuromorphic Vision

Neuromorphic vision is a sensing paradigm inspired by biological visual systems. My research uses spike cameras and event cameras to capture high-speed visual information at temporal resolutions well beyond those of conventional frame-based cameras.

## First-Author Papers

### SpikeReveal: Unlocking Temporal Sequences from Real Blurry Inputs with Spike Streams

**NeurIPS 2024 - Spotlight**

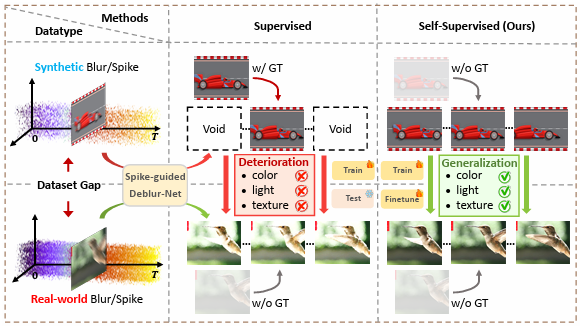

We develop a self-supervised, spike-guided image deblurring framework that addresses the synthetic-to-real domain gap. The core insight is that spike streams encode precise temporal information about scene motion, which enables sharp frame reconstruction without ground-truth supervision.
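To make the intuition concrete, here is a minimal single-pixel sketch. It is not the S-SDM implementation: it assumes a simple integrate-and-fire spike model, models the blurry value as the temporal mean of the light over the exposure, and uses hypothetical names (`simulate_spikes`, `firing_rate`) and made-up intensities.

```python
def simulate_spikes(intensities, threshold=1.0):
    """Integrate-and-fire for one pixel (assumed model): accumulate light,
    emit a spike when the accumulator reaches the threshold, then subtract it."""
    accumulator = 0.0
    spikes = []
    for value in intensities:
        accumulator += value
        if accumulator >= threshold:
            spikes.append(1)
            accumulator -= threshold
        else:
            spikes.append(0)
    return spikes


def firing_rate(spikes, start, end):
    """Estimate the mean intensity in [start, end) from the spike count."""
    window = spikes[start:end]
    return sum(window) / len(window)


# Hypothetical pixel: dim for 80 time steps, then bright for 80 time steps.
intensities = [0.125] * 80 + [0.5] * 80
spikes = simulate_spikes(intensities)

# A long exposure averages the whole interval; this is what a blurry frame stores.
blurry_value = sum(intensities) / len(intensities)

# Short windows of the spike stream recover the intensity at different times.
early = firing_rate(spikes, 0, 80)
late = firing_rate(spikes, 80, 160)

print(blurry_value, early, late)
```

The blurry value collapses both phases into one number, while the two spike-rate estimates separate them. That temporal detail is what a spike-guided method can exploit.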

**Key Contributions:**

- Self-supervised deblurring without paired training data

- Theoretical analysis of spike-blur image fusion

- State-of-the-art results on real-world blurry datasets

\[[Paper](https://arxiv.org/abs/2403.09486)\] \[[Code](https://github.com/chenkang455/S-SDM)\]

### SpikeCLIP: Rethinking High-speed Image Reconstruction Framework with Spike Camera

**AAAI 2025**

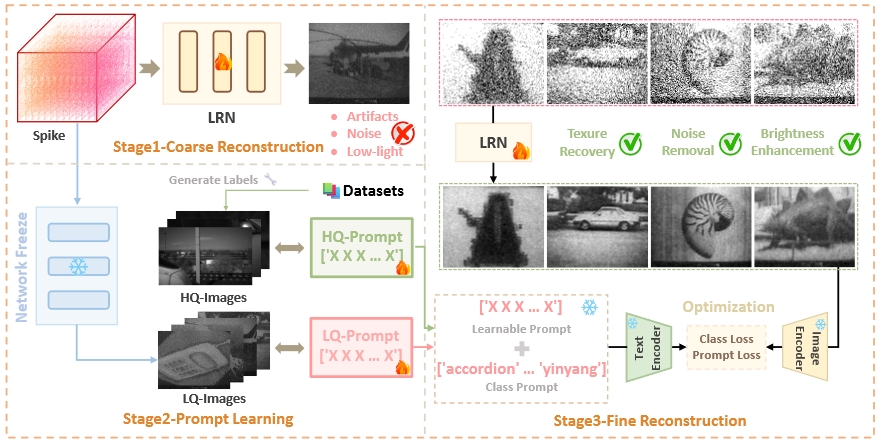

We introduce CLIP-based supervision for spike-to-image reconstruction. Instead of relying solely on pixel-level supervision, we leverage CLIP's semantic understanding to guide reconstruction, achieving competitive performance with a lightweight network.
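One common way to turn an embedding model such as CLIP into a training signal is to penalize the cosine distance between the embedding of the reconstructed image and a target text embedding. The sketch below only illustrates that general idea and may differ from the loss SpikeCLIP actually uses. The 4-D vectors are hypothetical stand-ins for encoder outputs, and `semantic_loss` is an illustrative name.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_loss(image_embedding, text_embedding):
    """Cosine distance: 0 when the embeddings point the same way."""
    return 1.0 - cosine_similarity(image_embedding, text_embedding)


# Hypothetical embeddings; a real pipeline would obtain these from CLIP encoders.
text_embedding = [1.0, 0.0, 0.0, 0.0]
good_reconstruction = [2.0, 0.0, 0.0, 0.0]  # same direction as the text
poor_reconstruction = [0.0, 3.0, 0.0, 0.0]  # orthogonal to the text

loss_good = semantic_loss(good_reconstruction, text_embedding)
loss_poor = semantic_loss(poor_reconstruction, text_embedding)
print(loss_good, loss_poor)
```

Because the loss depends only on embedding direction, it supervises semantic content rather than exact pixel values, which is the contrast with pixel-level losses drawn above.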

**Key Contributions:**

- First CLIP-based supervision for spike reconstruction

- Lightweight network with strong performance

- High-quality image generation pipeline

\[[Paper](https://arxiv.org/abs/2501.04477)\] \[[Code](https://github.com/chenkang455/SpikeCLIP)\]

### TRMD: Motion Deblur by Learning Residual from Events

**IEEE TMM 2024**

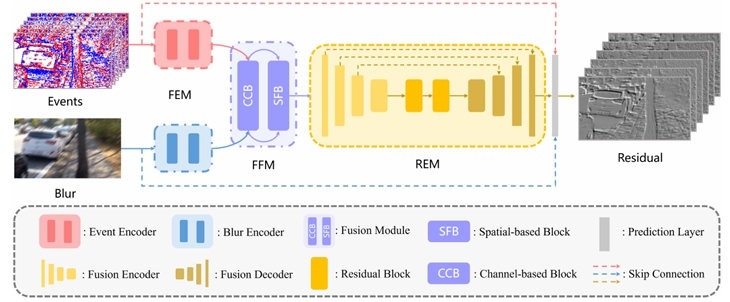

We propose a Two-Stage Residual-based Motion Deblurring framework for event cameras. Rather than directly predicting sharp images, we estimate residual sequences that bridge blurry inputs and sharp outputs.
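The residual formulation can be illustrated with a toy 1-D example. This sketch assumes the blurry input is the temporal mean of the latent sharp frames, and it computes the residuals directly from known sharp frames. In TRMD the residuals are estimated from events by a network, so this shows only the relation `sharp_t = blurry + residual_t`, not the method itself.

```python
# Hypothetical 1-D "scene": a bright bar that moves one pixel per time step.
sharp_frames = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]
num_frames = len(sharp_frames)

# Assumed blur model: the blurry frame is the per-pixel temporal mean.
blurry = [sum(column) / num_frames for column in zip(*sharp_frames)]

# Residual for each time step: what must be added to the blurry frame
# to obtain the sharp frame at that instant.
residuals = [
    [s - b for s, b in zip(frame, blurry)]
    for frame in sharp_frames
]

# Adding each residual back to the single blurry input recovers the sequence.
reconstructed = [
    [b + r for b, r in zip(blurry, residual)]
    for residual in residuals
]

print(blurry)
print(reconstructed == sharp_frames)
```

Under this blur model the residuals at each pixel sum to zero over time, so they carry only the temporal variation that the blurry frame averaged away.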

**Key Contributions:**

- Two-stage residual-based deblurring framework

- Residual sequences as intermediate supervision

- Effective event-image fusion strategy

\[[Paper](https://ieeexplore.ieee.org/document/10403964)\] \[[Code](https://github.com/chenkang455/TRMD)\]

## Related Projects

### Spike-Zoo: A Toolbox for Spike-to-Image Reconstruction

An open-source benchmark providing state-of-the-art pretrained models for spike-to-image reconstruction tasks.

\[[GitHub](https://github.com/chenkang455/Spike-Zoo)\] \[[Doc](https://spike-zoo.readthedocs.io/)\]