👋 Hi there!

I am Kang Chen (🤖💍👸). In 2023, I worked closely with Prof. Lei Yu on event-based motion deblurring. I am now pursuing a Ph.D. degree in Artificial Intelligence at Peking University under the guidance of Prof. Tiejun Huang and Prof. Zhaofei Yu. My research interests include neuromorphic vision, 3D vision, and RL for VLA. If you would like to get in touch, feel free to email me at mrchenkang@stu.pku.edu.cn.

🔬 Research Interests

Neuromorphic Vision

Leveraging spike and event cameras for high-speed imaging, motion deblurring, and temporal sequence reconstruction.

Primary Focus

3D Vision

Developing novel 3D reconstruction approaches using Gaussian Splatting with neuromorphic sensors.

Active Research

RL for VLA

Building efficient reinforcement learning frameworks for Vision-Language-Action models.

Core Expertise

🔥 News

📝 Publications

πRL: Online RL Fine-tuning for Flow-based Vision-Language-Action Models

Kang Chen, Zhihao Liu, Tonghe Zhang, Zhen Guo, Si Xu, Hao Lin, Hongzhi Zang, Quanlu Zhang, Zhaofei Yu, Guoliang Fan, Tiejun Huang, Yu Wang, Chao Yu

- We introduce πRL, the first open-source framework for efficient RL fine-tuning with flow-based VLAs.

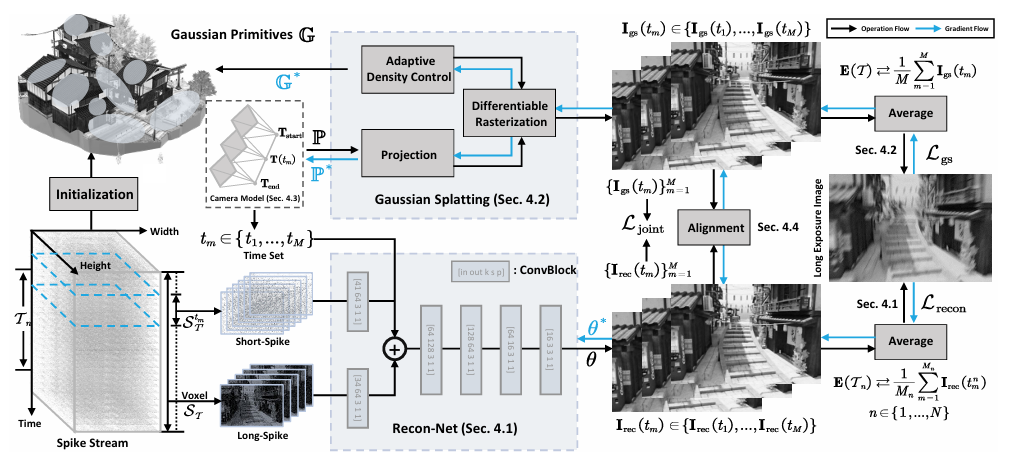

USP-Gaussian: Unifying Spike-based Image Reconstruction, Pose Correction and Gaussian Splatting

Kang Chen, Jiyuan Zhang, Zecheng Hao, Yajing Zheng, Tiejun Huang and Zhaofei Yu

- We demonstrate that the Spike-to-Image and 3D reconstruction tasks can mutually facilitate each other's optimization.

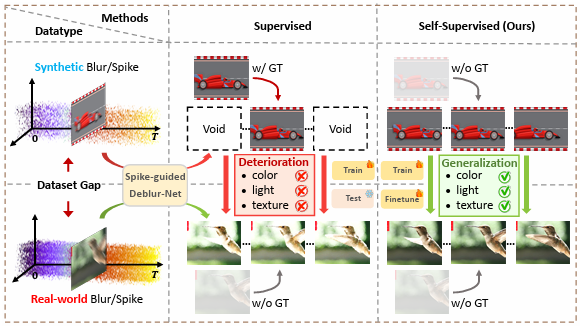

SpikeReveal: Unlocking Temporal Sequences from Real Blurry Inputs with Spike Streams

Kang Chen, Shiyan Chen, Jiyuan Zhang, Baoyue Zhang, Yajing Zheng, Tiejun Huang and Zhaofei Yu

- We develop a self-supervised spike-guided image deblurring framework, addressing the performance degradation due to the synthetic-real domain gap in supervised methods.

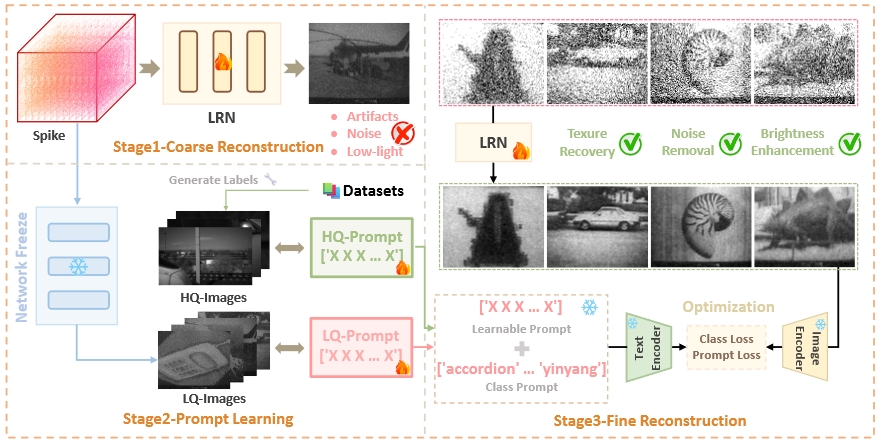

Rethinking High-speed Image Reconstruction Framework with Spike Camera

Kang Chen, Yajing Zheng, Tiejun Huang and Zhaofei Yu

- We introduce a novel spike-based image reconstruction framework that leverages the CLIP model to supervise network training using the class label of the captured object and the features of high-quality images.

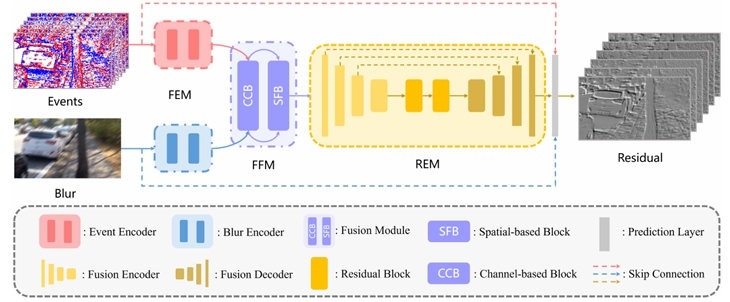

Motion Deblur by Learning Residual from Events

Kang Chen and Lei Yu

- We propose a Two-Stage Residual-based Motion Deblurring (TRMD) framework for event cameras, which utilizes the residual sequence as the intermediate variable, providing a stronger supervision signal for network training.

⚒️ Projects

💻 Services

Conference Reviewer

- Computer Vision and Pattern Recognition

- Conference on Neural Information Processing Systems

- International Conference on Learning Representations

- AAAI Conference on Artificial Intelligence

- ACM Multimedia

🍹 Misc
